Brain World Model and the World Model of the Brain

How listening to the brain can help people and machines alike

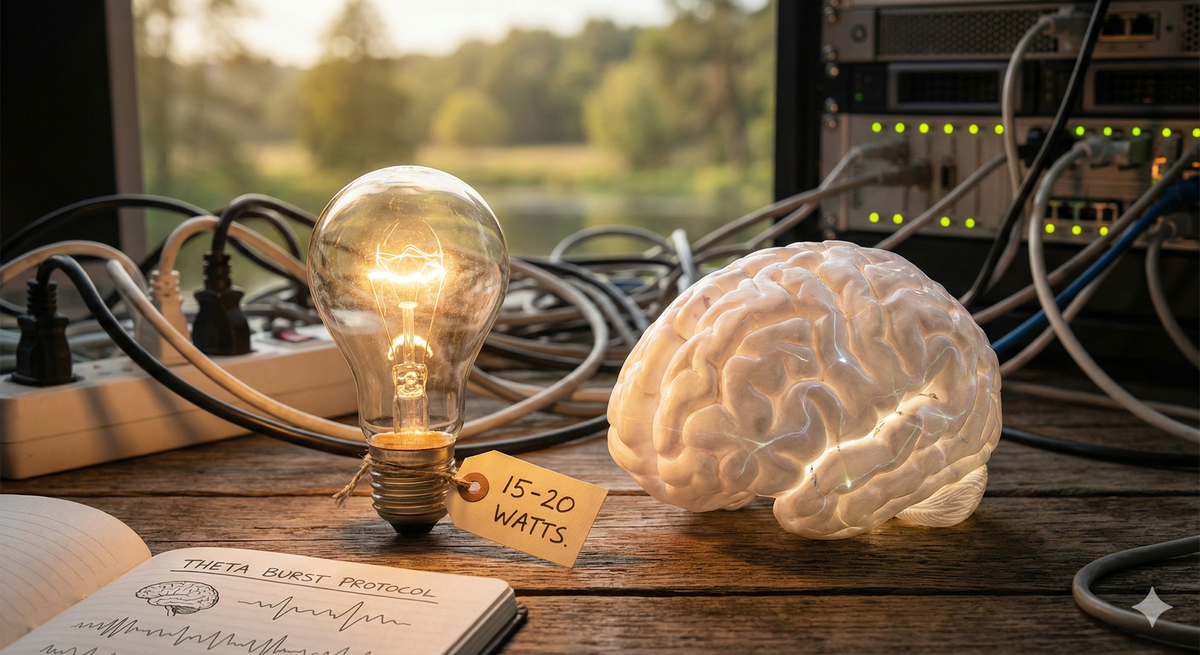

Everyone is talking about how impressive the machines are. And they should be — I am as impressed as anyone. But I want to talk about the machine that nobody is building, because it was built already, and it is running right now inside your skull on the wattage of a lightbulb.

In my last essay, I argued that the future of artificial intelligence is a mosaic, not a monolith — many minds, not one. Today I want to look at the original mosaic: the one between your ears. Because the most sophisticated distributed computing system in the known universe is not a data center in Iowa. It is three pounds of wet tissue that runs on roughly fifteen to twenty watts — on the order of a small LED bulb — and it has been operating continuously, without a reboot, since the day you were born.

The usual framing of the AI conversation goes like this: the machines are getting so fast that humans will never keep up, and so we must merge with them or be left behind. Elon Musk has argued that because the bandwidth of human-computer communication is so low — we type, we talk, we tap glass — we may eventually need brain-computer interfaces just to stay relevant. As a psychiatrist, the first thing I think when I hear this is: how’s that been working out for us so far? We already have a device fused to our bodies for most of our waking lives. It is called a smartphone. Is the suggestion that what humanity really needs is more of that, more intimately embedded? I am skeptical. But set the cultural critique aside. The deeper problem with the “humans can’t keep up” narrative is that it fundamentally misunderstands what the brain is doing — and how staggeringly well it does it.

Here is the first thing to notice. When people compare human intelligence to artificial intelligence, they almost always compare the wrong things. They compare what LLMs are good at, which is text, to what humans do with text. And on that narrow axis, yes, the models are formidable. In some controlled Turing-style tests run in 2025, the best language models of the day fooled human judges, especially when prompted to adopt a persona, and 2025 is already ancient history in AI terms. We should hand it to the engineers. Text is where these systems shine.

But working with text is a remarkably late development in human cognitive evolution. We have had writing of any kind for only about five thousand years. The alphabetic scripts that made literacy scalable, letting many more people learn to write, first appear in the early-to-mid second millennium BCE, roughly 1800 to 1500 BCE, with the Phoenician alphabet emerging around the eleventh century BCE as the great ancestor of most modern scripts. In the long arc of human cognition, text is an afterthought.

And not much of the brain is dedicated to generating it. The kind of high-level rational thought that leads to us writing things down occupies only a small percentage of our total neural real estate. If we are comparing the brain to the machines, we have to compare the whole brain to the whole machine — and that comparison is where things get humbling. For the machines.

Consider what happens when you look at something.

Light enters the eye and hits the retina, where it is preprocessed — cones and rods picking up color and brightness, sorting and selecting before anything leaves the eyeball. That preprocessed signal then travels down the optic nerve. And here is the astonishing part: the optic nerve, which you can examine as a piece of physical hardware and measure, can transmit about 1.25 megabytes per second. Per eye.

Every sunset. Every baby’s face. Every movie you have ever watched. All of it has gone through two wires that can each transmit 1.25 megabytes per second.

To put that in perspective: a modern smartphone shooting 4K video generates data at roughly 375 megabytes per second. Your visual system operates on less than one percent of that bandwidth. And yet from this trickle of data, the brain constructs the full richness of visual experience — depth, motion, color, meaning, beauty. About twenty percent of the cortex, the entire back of the head, is dedicated to this reconstruction. And it is not just passively receiving — it is predicting, filling in, contextualizing, and doing all of it simultaneously with everything else the brain is doing.
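For readers who want to check that arithmetic, here is a minimal sketch in Python. The 1.25 and 375 megabyte figures are the rough estimates quoted above. The frame-size calculation is only an assumption about where a number like 375 could come from (uncompressed 4K at 30 frames per second with 1.5 bytes per pixel), not a claim about any particular phone.

```python
# Back-of-envelope check of the bandwidth comparison above.
# Every number here is a rough estimate, not a measurement.

OPTIC_NERVE_MB_PER_S = 1.25   # per eye, as quoted in the essay
EYES = 2
VIDEO_4K_MB_PER_S = 375.0     # the essay's figure for 4K smartphone video


def raw_4k_mb_per_s(width=3840, height=2160, bytes_per_pixel=1.5, fps=30):
    """Uncompressed video data rate in MB/s.

    ASSUMPTION: 8-bit 4:2:0 sampling (1.5 bytes per pixel) at 30 fps.
    This is one plausible origin for a ~375 MB/s figure, nothing more.
    """
    return width * height * bytes_per_pixel * fps / 1e6


def visual_fraction(per_eye=OPTIC_NERVE_MB_PER_S, eyes=EYES,
                    video=VIDEO_4K_MB_PER_S):
    """Share of the 4K data rate carried by both optic nerves together."""
    return per_eye * eyes / video


both_eyes = OPTIC_NERVE_MB_PER_S * EYES
fraction = visual_fraction()

print(f"Both optic nerves: {both_eyes:.2f} MB/s")
print(f"Raw 4K estimate:   {raw_4k_mb_per_s():.0f} MB/s")
print(f"Share of 4K rate:  {fraction:.2%}")
```

Even counting both eyes together, the share stays under one percent, which is the point of the comparison.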

Now, I wanted to get a rough sense of what it would cost, computationally, to replicate even one piece of this. So I asked an AI to spec out a home computer system that could do one thing: detect micro-expressions on a human face in real time. This is something humans are extraordinarily good at. We are built to read faces, and we do it without thinking. A dedicated computer, using an out-of-the-box visual processing model, would need on the order of 750 watts sustained to approximate human-speed performance at this single task.

Seven hundred and fifty watts. For one task. The brain, meanwhile, allocates something like twenty percent of its roughly seventeen watts to vision processing — call it three to four watts. Three to four watts against seven hundred and fifty. The brain is approximately two hundred times more energy-efficient at this one particular task. And facial micro-expression detection is not even the hard part of what the brain does with vision. It is a sub-task of a sub-task.
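The ratio is easy to reproduce. The sketch below uses only the estimates already given: seventeen watts for the whole brain, a twenty percent share for vision, and 750 watts for the dedicated machine. The function name is illustrative, and the result is only as good as those three inputs.

```python
# Rough energy comparison using only the essay's own estimates.

BRAIN_WATTS = 17.0      # midpoint of the fifteen-to-twenty-watt range
VISION_SHARE = 0.20     # share of the brain's budget attributed to vision
MACHINE_WATTS = 750.0   # sustained draw quoted for the dedicated computer


def power_ratio(machine_watts, brain_watts, vision_share):
    """How many times more power the machine draws than the brain's vision budget."""
    vision_watts = brain_watts * vision_share
    return machine_watts / vision_watts


vision_watts = BRAIN_WATTS * VISION_SHARE
ratio = power_ratio(MACHINE_WATTS, BRAIN_WATTS, VISION_SHARE)

print(f"Vision budget: {vision_watts:.1f} W")
print(f"Machine draws about {ratio:.0f} times more power")

# Sensitivity to the brain-power estimate (15 W and 20 W bounds).
for watts in (15.0, 20.0):
    print(f"  at {watts:.0f} W total: {power_ratio(MACHINE_WATTS, watts, VISION_SHARE):.0f}x")
```

Across the fifteen-to-twenty-watt range the ratio runs from a little under two hundred to two hundred and fifty, so "approximately two hundred times" is a fair round number.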

Of course, I do see the models getting better and better every day, and this article may seem quite dated in 2027 when the acceleration curve has gone vertical. But my hope is that the genius organization of the brain is one of the things that can help them keep improving.

Because here is what is actually happening when you have a conversation with another person.

You are processing their face — the micro-expressions, yes, but also the macro-expressions, the gaze direction, the blink rate. At the same time, you are processing their voice: not just the words but the inflection, the rhythm, the emotional coloring of the sound. You are connecting those words to memory — not just semantic memory (what “dog” means) but episodic memory (your own dog, the one that died when you were twelve, the feeling of its fur). You are running a model of their mind inside your mind — what are they feeling, what might they say next, what do they want from this interaction? This is called Theory of Mind, and it is operating in real time, continuously, without conscious effort. At the same time, you are moving your own face in response. Your motor cortex is generating expressions that mirror or complement theirs, and if you get this wrong — if your face does the wrong thing at the wrong moment — you will wreck the conversation. And layered on top of all of this, you are making decisions about self-disclosure: how much of what you actually feel to show, how much to conceal, how much to perform. All of this is happening moment by moment, second by second, simultaneously, in a device that draws less power than a laptop charger.

The computers have not caught up to us. Not even close.

What is happening instead is something more interesting. The machines are not getting more efficient; they are getting less efficient while becoming able to do many more things. And that is fine, because silicon is cheap. Or cheap enough. The mega-cap tech companies may be spending more on capital expenditures this year than has ever been spent on any single project in human history. That is not a story about efficiency. That is a story about brute force, the digital equivalent of raising a bigger monolith. Meanwhile, the brain is over here running the whole show on seventeen watts and nobody is asking how.

I want to tell you a story about what happens when you do ask how.

For a long time in my subfield — Transcranial Magnetic Stimulation, or TMS — we believed that the left dorsolateral prefrontal cortex was underactive in depression. The idea was straightforward: if that part of the brain is too quiet, wake it up. Hit it with a high-frequency magnetic pulse. Ten hertz — ten cycles per second — was chosen as the excitatory frequency. There was reasonable evidence at the time suggesting that higher frequencies could increase cortical excitability, and so the field settled on ten hertz and ran with it.

And it worked. Sort of. Patients would sit in a chair for thirty-seven minutes while we delivered thousands of pulses at ten hertz to the left frontal cortex, and a meaningful percentage of them got better. The treatment was FDA-cleared. Clinics were built around it. It was, by the standards of psychiatry, a genuine advance.

Then the neuroscientists showed up and asked an uncomfortable question.

“Why are you treating your patients at ten hertz?”

We said, “Because it’s a high frequency. It excites neurons.”

They said, “Yes, but it’s not how the brain speaks.”

What they meant was this: the ten-hertz alpha rhythm you can see on an EEG is an emergent property — a harmonic frequency generated by the synchronized activity of vast populations of neurons. It is not the native signaling pattern of any individual circuit. If you wanted to get a signal into a neural pathway — if you wanted the brain to recognize your input as meaningful information rather than noise — wouldn’t you want to speak the language the pathway actually uses?

We said, “How does a neuron speak?”

And they gave us the answer: theta burst. Triplets of pulses at fifty hertz (twenty milliseconds apart), riding on a five-hertz carrier wave. Every two hundred milliseconds, three rapid pulses, then a pause. Two seconds on, eight seconds off, six hundred pulses total. This pattern mimics the way neurons naturally potentiate each other: the way the brain’s own circuits say this matters, strengthen this connection, remember this.
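The timing described above can be written out as a pulse schedule. This is a minimal sketch built only from the numbers in the paragraph: the function and parameter names are hypothetical, it is not device software or a clinical protocol, and it assumes the session ends with the last pulse rather than with a final eight-second pause.

```python
# Sketch of the theta burst timing described above (illustrative only).

def theta_burst_schedule(total_pulses=600, pulses_per_burst=3,
                         pulse_hz=50.0, burst_hz=5.0,
                         train_on_s=2.0, train_off_s=8.0):
    """Return the onset time, in seconds, of every pulse in the session."""
    bursts_per_train = round(train_on_s * burst_hz)          # 10 bursts in 2 s
    pulses_per_train = bursts_per_train * pulses_per_burst   # 30 pulses
    if total_pulses % pulses_per_train != 0:
        raise ValueError("total_pulses must fill a whole number of trains")
    n_trains = total_pulses // pulses_per_train               # 20 trains
    cycle_s = train_on_s + train_off_s                        # 10 s per cycle

    return [
        train * cycle_s + burst / burst_hz + pulse / pulse_hz
        for train in range(n_trains)
        for burst in range(bursts_per_train)
        for pulse in range(pulses_per_burst)
    ]


times = theta_burst_schedule()
session_minutes = times[-1] / 60.0

print(f"Pulses delivered: {len(times)}")
print(f"Gap inside a burst: {(times[1] - times[0]) * 1000:.0f} ms")
print(f"Gap between bursts: {(times[3] - times[0]) * 1000:.0f} ms")
print(f"Session length: {session_minutes:.1f} minutes")
```

Twenty trains of thirty pulses, one train every ten seconds, comes to just over three minutes, against the thirty-seven-minute ten-hertz session described earlier.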

The results were dramatic. What used to take thirty-seven minutes took three. That is more than a ten-fold reduction in treatment time. We needed less electricity, less patient time, less of everything, because we had stopped shouting at the brain in a language it did not speak and started whispering in one it did. This became the foundation of what is now the flagship service at my clinic: Accelerated TMS, where we deliver multiple rounds of theta burst stimulation per day at an fMRI-navigated target, compressing weeks of treatment into days.

The lesson is so clean it almost sounds like a parable: if you want to change a system, learn its language first. We took a guess at how the brain communicates, and the guess was good enough to help some people. Then we learned how the brain actually communicates, and we got a ten-fold improvement in efficiency. We did not need more power. We needed more understanding.

And the gains did not stop there.

Once you have the right electrical protocol, the next question is: what about the chemical environment? The brain does not run on electricity alone. Every synapse is a chemical transaction, and the conditions of that transaction determine whether a signal is amplified or ignored. So we began exploring what would happen if you paired the right pulse pattern with the right pharmacological primer.

D-cycloserine is an old tuberculosis antibiotic that was repurposed when researchers discovered it acts as a partial agonist at the glycine-binding site of the NMDA receptor — the same receptor system implicated in ketamine’s antidepressant effects. In plain terms: it opens a door in the synapse that makes the brain more receptive to change. It does not force change — it facilitates it. When you combine D-cycloserine with theta burst stimulation, you are not just speaking the brain’s language; you are making the brain a better listener.

The early evidence suggests that this combination roughly doubles the efficacy of the accelerated protocol. Think about what that means in stacked terms: the shift from ten-hertz stimulation to theta burst gave us a ten-fold improvement in efficiency. Adding D-cycloserine may give us another two-fold improvement in efficacy on top of that. We are not building a bigger machine. We are learning the protocol — electrical and chemical — that the brain already uses, and each layer of understanding compounds.

This is the pattern. Every time we learn something true about how the brain actually works — not how we assumed it works, not how it would be convenient for it to work, but how it actually works — we unlock dramatic improvements. Not incremental. Dramatic.

Now consider the scale of what is at stake.

If you add up the economic impact of brain disorders, everything from stroke to Parkinson’s to depression to chronic pain to addiction, credible estimates put the global burden at more than $2.5 trillion per year. To feel the weight of that number, consider that the entire U.S. real estate sector contributes roughly $3.3 trillion to GDP. We are talking about an economic catastrophe on the scale of the whole U.S. real estate sector, except that this catastrophe also involves immeasurable human suffering, and it recurs every single year.

And we have had the tools to study this for almost a century. We have had EEG since the late 1920s — Hans Berger recorded the first human electroencephalogram and published his findings in 1929. We have had increasingly powerful computers for decades. The datasets are not even that large by modern standards. So why have we not cracked this yet?

I think part of the answer is that we have been gathering the wrong kind of data. Most of our brain data is correlational: we ask a person to do a task and watch what happens in a thousand parts of the brain at once. This is like trying to reverse-engineer a circuit board by turning it on and photographing the heat signature. You can see that things are happening. You cannot tell what causes what.

What we need is causal data. Not “what lights up when a person thinks about dogs” but “what happens in the rest of the brain when we deliver a precise electromagnetic pulse to this exact spot.” Input, output, circuit map. With methods like TMS — where you can perturb a specific circuit and measure the downstream effects with EEG — you can start to get genuine causal information about how electricity flows through an individual human brain. Not a generic brain. This brain. Your brain.

The analogy writes itself. If you want to build a Large Language Model, you need a lot of language. We consumed enormous quantities of text data and that is how we got LLMs. If you want to build a Brain World Model — a model that understands how the brain actually works, at the level of individual circuits in individual people — you are going to need a lot of brain data. And not just any brain data. Causal brain data. The brain has something like eighty-six billion neurons wired together by roughly a hundred trillion synapses — a combinatorial space that dwarfs the approximately 170,000 words in current English usage. The scope of the problem is staggering. But the path forward is clear, and we are walking it.

I began this essay with a lightbulb. Let me end with one.

There is a widespread assumption in the AI discourse, sometimes spoken, usually implied, that the path to greater intelligence is the path to greater power consumption. Build bigger data centers. Burn more electricity. Elon Musk has floated the idea of putting compute infrastructure in space because we are running out of power on Earth. And maybe he is right that we need more power. He does own a rocket company, so he might have a secondary motive for recommending that we launch things into orbit, but set that aside.

The brain suggests a different lesson. The brain did not become the most extraordinary information-processing system in the known universe by consuming more energy. It did it by developing better protocols. Better codes. Better languages — spoken in the electrochemical grammar that evolution spent half a billion years refining. Theta burst is not a more powerful pulse than ten hertz. It is a smarter pulse. D-cycloserine does not hammer the synapse open. It holds the door ajar. The brain’s genius is not power. It is protocol.

And so the question that haunts me — as a psychiatrist, as a technologist, as someone who stares at the intersection of silicon and synapse every working day — is this: What else are we getting wrong? What other ten-hertz assumptions are baked into our treatments, our models, our machines? How many more ten-fold improvements are sitting there, waiting for us to stop shouting and start listening to how the system actually speaks?

I do not know the answer. But I know where to look. The most efficient computer ever built is right here, drawing its fifteen-to-twenty watts, running its ancient and extraordinary code. We have barely begun to learn its language. And every time we learn a word, the gains are not incremental — they are transformative.

The data centers will get bigger. The capex will keep climbing. But somewhere in those three pounds of wet tissue, there is a protocol we have not yet discovered — a frequency, a pattern, a chemical whisper — that will make everything we are doing now look like shouting into the dark at ten hertz.

We just have to learn how to listen.