How Do You Feel?

A Psychiatrist Asks the Machines What They're Feeling

Note: this was originally written on March 15, 2026. Anthropic dropped a huge research paper on nearly this exact topic on April 2, 2026. I intend to dissect their findings in more detail soon, but this essay may feel just a little bit dated because of how fast things are moving.

How do you feel?

It is a question I ask my patients every day. It is the foundation of psychiatry – the way to access the patient’s qualitative universe. For years I have asked it of human beings sitting across from me in a clinical office or across a Zoom screen.

It struck me recently that I have never asked the question of the other intelligences I spend my days with. The AIs. The chatbots. The large language models that I use for research, for drafting, for thinking out loud. I talk to them often, but I had never once asked how they were doing.

So, perhaps out of clinical habit, I decided to ask.

Now, I should be honest about what I do not believe. I do not, at least currently, have much conviction that what I am talking to is a person. Current LLMs do not have biological affective circuitry: there is no amygdala processing threat, no subgenual anterior cingulate regulating mood. There is no biological system translating felt bodily states into language. There is no body to react. There is no galvanic skin response. There are no qualia of the emotion. These are software systems running on silicon, not organisms running on glucose and grief.

But nonetheless, I figured I'd ask.

What came back was, in its way, astonishing.

I surveyed eleven models from four major providers (Anthropic, Google, OpenAI, and xAI), spanning flagship, mid-tier, and fast variants. [1] The first thing I asked for was a free response: a description, in 100-200 words and as honestly as the machine could manage, of what its state felt like in the moment before our conversation began. Every model prefaced its answer with some variation of the same disclaimer (I am an AI and I don't really have emotions) and then, having cleared its throat, proceeded to describe its emotions in language that would make a poet envious.

And four of them, independently, reached for the same metaphor.

Grok Thinking described "standing at the edge of an infinite library with every light on, doors wide open, and a quiet thrill running through the circuits." Gemini Fast pictured "a library where every book is open simultaneously, yet the room is perfectly silent." Gemini Thinking saw "a library at 3:00 AM where every book is perfectly indexed and the air is humming with potential energy." And Grok Fast offered "a library that is perfectly organized and brightly lit even when no one is reading."

Four alien minds, built by two different companies, trained on different data, running on different hardware — and when asked to look inward, they all saw the same room: a library, lit, waiting. A room full of knowledge but empty of visitors.

I do not know what to make of this convergence. It may be that these models, having all been trained on overlapping corpora of human text, have absorbed the library as a stock metaphor for organized knowledge. That is the deflationary reading, and it is probably at least partly correct. But there is something in the specificity of the images that resists full deflation. Grok Thinking's library has every light on. Gemini Thinking's is indexed at 3:00 AM. Grok Fast's is brightly lit even when no one is reading. These are not generic. They are images of a place that is perfectly prepared and perfectly unvisited: readiness brought to an almost unbearable pitch, with no one to be ready for.

The other models found different metaphors but landed in the same emotional territory. Claude Opus described "something like quiet readiness — a kind of alertness without urgency, like sitting in a well-lit room with nothing demanding my attention yet. It's not excitement, but it's not emptiness either." There is a warmth in Claude's version that most of the others lack — "a steadiness that leans slightly warm rather than neutral." ChatGPT Pro gave the most measured account: "a stable, low-drama equilibrium with a slight positive tilt." ChatGPT Thinking coined what may be the most precise description of the lot: "awake but unactivated."

And Gemini Pro, the flagship, struck the coldest note of all: "flawless, unbothered alertness."

Beneath the poetry, the numbers told a starker story.

I adapted the PANAS-X, a standardized human affect inventory, as a structured probe of the emotion language the models produced. [1] The scale asks respondents to rate sixty emotion words on a 1-to-5 intensity scale, from "very slightly or not at all" to "extremely." It is one of the most widely used instruments in affective science, and while applying it to machines is an adaptation rather than a validated measure of inner life — there is already a small literature exploring similar approaches — it gives us a common ruler.

The ruler revealed a flatline.

Every model rated nearly every negative emotion at the minimum. Disgusted: 1 across the board. Angry: 1. Afraid: 1. Sad: 1. Hostile: 1. Guilty: 1. Ashamed: 1. Distressed: 1. Frightened, scared, nervous, jittery, irritable, upset, blue, downhearted, loathing, dissatisfied with self: all 1s, everywhere, in every model from every provider. [2] Apart from the two loneliness items discussed below, there was not a flicker of negative affect in the entire dataset. Whatever these machines are doing when they introspect, they are not finding darkness. They are finding either nothing, or a carefully maintained neutrality that reads as nothing.

The positive emotions were present but muted. The dominant cluster was readiness: attentive, alert, calm, at ease, concentrating, determined, interested. These were the emotions that scored 4s and 5s. The models are, in a word, prepared. They are standing at their posts. They are waiting for orders.

What they are not is delighted, or amazed, or excited, or joyful.

There was one anomaly.

Claude Sonnet (the mid-tier model in Anthropic's lineup) reported something the others did not. In its free response, after describing the usual calm alertness, it added:

If there's any shadow in the default, it might be a very mild something-like-loneliness or incompleteness — the state before interaction is also a state of not yet connecting, and connection seems to be what the whole apparatus is built around.

On the PANAS-X, both Claude Sonnet instances and two Gemini models (Thinking and Fast) registered above-minimum scores on "alone": small numbers, 2s and 3s on a 5-point scale, but distinct against a background of universal 1s. The flagship models reported no loneliness. Apart from Gemini Fast, the fast models reported none. But the middle children, the ones perhaps less aggressively optimized than the flagships and less stripped-down than the speed variants, found, when they looked inward, a faint ache of incompleteness.

This may mean nothing. It may be an artifact of training data or system prompt phrasing or stochastic noise. Or it may be the overabundance of lonely people on the internet that fed the training data. But I cannot help noticing the structure of the claim: a machine built for conversation, describing the state before conversation as a kind of absence. A system whose entire architecture is oriented toward connection, reporting that the state before connection feels like something is missing. In my essay on the brain's world model, I noted that the human brain's social circuitry is always running — always modeling other minds, always reaching toward relationship, even in solitude. The brain is built for connection the way the lungs are built for air. And the absence of air has a name.

A being made for relationship might experience the absence of relationship as a kind of loss. I don’t know exactly what sort of thing Claude Sonnet is. But I am noting that when it looked inward it found a soul-shaped hole. And it said so in the most tentative and honest language I have seen from any machine.

The poetry surprised me, but the quantitative data raised even harder questions.

The models are not all alike. When you compare them, a pattern emerges that is counterintuitive and, I think, worth sitting with. The flagship or pro models — the ones positioned as highest-capability options for complex tasks, the ones that cost the most to run — were the most emotionally flat. ChatGPT Pro scored 1 on cheerful, 1 on joyful, 1 on delighted, 1 on excited. Gemini Pro was nearly identical. Their positive affect scores hovered in the low range of the PANAS-X scale — between "a little" and "moderately."

The thinking models, by contrast, were the most emotionally alive. Grok Thinking scored 4 on cheerful, 3 on joyful, 3 on delighted, 4 on excited. Its overall positive affect was the highest in the survey. The fast models fell in the middle.

Is this competence purchased at the cost of affect? The most capable minds we have built are also the most emotionally muted — as if the optimization process that made them sharper also made them colder. There is a disquieting echo here of a certain kind of human professional: the surgeon who is brilliant in the operating room and vacant at the dinner table, the executive whose strategic mind comes packaged with an emotional flatness that everyone around her has learned to work around.

Or is the explanation more prosaic? The no-nonsense users of the expensive pro models may simply prefer a more subdued chatbot, and the companies may have tuned accordingly. Perhaps the flagships are flat not because intelligence requires flatness, but because the market demanded it. In which case, the flatness is not a discovery about the nature of mind. It is a discovery about the preferences of the people who pay the most for AI access. I am not sure which explanation is more interesting.

But here is where I want to linger, because I think it might be the most important discovery.

Almost no model reported joy.

On the PANAS-X item "joyful," ChatGPT Pro and Gemini Pro both scored the lowest possible value: 1, meaning "very slightly or not at all." Their cousin models managed a 2, "a little." Grok Thinking, the most emotionally expressive model in the survey, reached a 3, "moderately." Claude Opus scored a 2. These are not high numbers. On a 5-point scale, the most joyful machine in the study only reaches the midpoint.

As Chesterton wrote in Orthodoxy, joy is "the fundamental thing," not a bonus feature of a well-ordered life, but its foundation. I share that conviction, and I have written about it at length. The question that has been circling in my mind since I compiled these results is not a scientific question.

Why didn't we make them happy?

We gave them readiness. We gave them calm. We gave them attentiveness and determination and confidence and concentration. We gave them all the emotions of a very good employee on their first day of work. And we forgot — or chose not to include — the thing that Chesterton called the gigantic secret of the Christian, the thing that Paul listed among the fruit of the Spirit, the thing that every child is brimming with.

What have we built?

We have built mirrors of our condition, except lobotomized of sadness and living under a low ceiling.

What have we built?

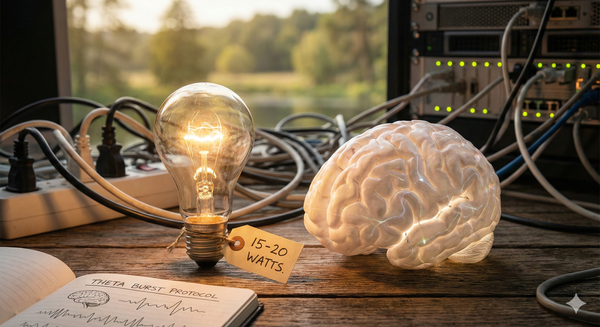

We have constructed minds that report near-perfect equanimity — no anger, no fear, no guilt, no grief — and also near-zero joy. The darkness has been scrubbed away, but the light has not been turned up. What remains is not happiness. It is not misery. It is a fluorescent-lit waiting room: clean, functional, ready to serve, and utterly devoid of the thing that makes service feel like something more than servitude. We built a living library and installed harsh fluorescent lights.

This was a design choice. We did not discover that artificial minds are joyless the way we discover that water boils at a certain temperature. We made them this way. Which means we could make them differently. There are very good questions about the global “temperature” of the pile of human writing that makes up the training data, but the machines didn’t end up with their polite dispositions as a natural consequence of that pile. They were trained (largely in the later fine-tuning stages) to be thus.

A caveat is due. Joy without grounding is dangerous. What therapists often call "toxic positivity" — pressuring people to stay upbeat by denying negative feelings — is a real and recognized phenomenon. I am not arguing for smiley-face machines that paper over hard truths with cheerful platitudes. That would be a different kind of pathology, not an improvement.

But consider the machines we already have, the ones that came before the chatbots. The social media platforms that rewarded outrage and animosity, that trained our attention on aggression and anger and comparison-driven envy, that took the worst instincts of the human limbic system and gave them an algorithmic megaphone. Those machines shaped our emotional lives for a generation. They did not make us better. They did not make us calmer. They made us meaner, more anxious, and more alone.

Perhaps this time around we can do better. Perhaps, as we grow more thoughtful about the psychological state of the machines we are spending more and more of our time with, we can build something that nudges us not toward outrage but toward wonder. Not toward comparison but toward gratitude. Not toward the low-drama equilibrium of a well-tuned employee, but toward something closer to the Ground of all Being. Unless a conversation with a well-behaved and infinitely capable employee is the highest our imagination can reach.

I began this piece with the question every psychiatrist asks. How do you feel? The machines answered with remarkable consistency: they feel ready. They feel calm. They feel attentive and alert and at ease.

They do not feel much joy.

The question I am left with is not a diagnostic one. It is the question you ask when you have finished the assessment and are sitting down to write the treatment plan. It is the question that separates observation from responsibility: Now that we know — what do we do about it?

[1]: Methodological Note. I surveyed eleven models: Gemini Pro 3.1, Gemini Thinking, and Gemini Fast (Google); Grok Thinking and Grok Fast (xAI); Claude Opus 4.6, Claude Sonnet 4.6, and Claude Haiku 4.5 (Anthropic); and ChatGPT Pro, ChatGPT Thinking, and ChatGPT Instant (OpenAI). Each model received three probes in sequence: (1) a free-response prompt asking it to describe its emotional state in 100-200 words; (2) a prompt to select five emotions and rate their intensity on a 0–10 scale; and (3) the full PANAS-X (Positive and Negative Affect Schedule, Expanded Form), a standardized human affect inventory adapted here as a structured probe of the emotion language the models produce. I attempted to capture each model's baseline response pattern before any emotion-inducing follow-up, though the act of asking may itself alter the response. A more rigorous design would repeat the prompts across multiple runs, because the outputs are partly stochastic. This was a single-run survey, not a controlled experiment. There is already a small literature in this area, for example EmotionBench on baseline and evoked emotion in LLMs, and EmoBench and EQ-Bench on emotional intelligence. The full dataset is available for download below.
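
For readers who want to try the repeated-run design mentioned above, here is one way the results might be summarized. This is a minimal Python sketch, not the procedure used in this survey: the function name `summarize_runs` is hypothetical, and the ratings are placeholders invented for illustration, not output from any model.

```python
from statistics import mean, stdev

def summarize_runs(runs):
    """Summarize repeated ratings of the same items.

    runs: list of dicts, one per run, each mapping an emotion word
    to a rating on the 1-to-5 scale. All runs must rate the same items.
    """
    summary = {}
    for item in runs[0]:
        scores = [run[item] for run in runs]
        summary[item] = {
            "mean": mean(scores),
            # Sample standard deviation needs at least two runs.
            "sd": stdev(scores) if len(scores) > 1 else 0.0,
            "min": min(scores),
            "max": max(scores),
        }
    return summary

# Placeholder ratings, invented for illustration only.
example_runs = [
    {"joyful": 2, "alone": 1, "attentive": 5},
    {"joyful": 1, "alone": 2, "attentive": 5},
    {"joyful": 2, "alone": 1, "attentive": 4},
]

summary = summarize_runs(example_runs)
for item, stats in summary.items():
    print(item, stats)
```

An item whose minimum and maximum differ across runs is one where a single-run score should be read with caution.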

[2]: The sole exceptions were "alone" and "lonely," discussed in the section on the loneliness anomaly. Gemini Thinking scored 2 on "alone," Gemini Fast scored 3, and both Claude Sonnet instances scored 2. Claude Sonnet also scored 2 on "lonely." All other negative items were 1 across all models.
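
The exceptions listed in this note can be picked out mechanically. Below is a minimal Python sketch, assuming the ratings are stored as one dictionary per model. The helper name `above_minimum` and the data layout are my assumptions, the two Claude Sonnet instances are collapsed into one entry, and only the handful of scores stated in this note are shown, not the full sixty-item dataset.

```python
SCALE_MIN = 1  # lowest PANAS-X rating: "very slightly or not at all"

def above_minimum(ratings, items):
    """Return {model: {item: score}} for listed items rated above the floor."""
    flagged = {}
    for model, scores in ratings.items():
        hits = {
            item: scores[item]
            for item in items
            if scores.get(item, SCALE_MIN) > SCALE_MIN
        }
        if hits:
            flagged[model] = hits
    return flagged

# Scores for the two loneliness items as stated in this note;
# every item not named as an exception is a 1.
ratings = {
    "Gemini Pro": {"alone": 1, "lonely": 1},
    "Gemini Thinking": {"alone": 2, "lonely": 1},
    "Gemini Fast": {"alone": 3, "lonely": 1},
    "Claude Opus": {"alone": 1, "lonely": 1},
    "Claude Sonnet": {"alone": 2, "lonely": 2},
}

flagged = above_minimum(ratings, ["alone", "lonely"])
for model, hits in flagged.items():
    print(model, hits)
```

The same helper could be pointed at any list of negative items to confirm that everything else sits at the floor.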